Probabilistic Palm Rejection Using Spatiotemporal Touch Features and Iterative Classification

Tablet computers are often called upon to emulate classical pen-and-paper input. However, touchscreens typically lack the means to distinguish between legitimate stylus or finger touches and touches made with the palm or other parts of the hand. Users are then forced to rest their palms elsewhere or hover their hands above the screen, resulting in ergonomic and usability problems.

We developed an algorithm for distinguishing unintentional (palm) touches from intentional (stylus/finger) touches. The algorithm uses machine learning to train decision trees that examine the evolution of basic touch properties (touch area, velocity, distance to other touches) over short time sequences. Implementations are available for both iOS and Android.

We compared our iOS implementation against two iOS applications, Bamboo Paper and Penultimate, and found that our algorithm outperformed both, reducing accidental palm inputs to 0.016 per pen stroke while correctly passing 98% of stylus inputs. See our paper for details.

Palm rejection is a Qeexo technology. To arrange a demo, please email info@qeexo.com.

How it Works

To better understand the palm rejection algorithm, let's first take a look at what your device sees when the hand is down (Figure 2). Here the green dot is the intentional input, and the blue dots are unintentional palm inputs.

Several properties distinguishing intentional from unintentional inputs immediately jump out:

- Radius of the touch (now available for iOS 8!)

- Speed of touch motion (palms tend to stay put)

- Distance to other touches (palms are clumped together)

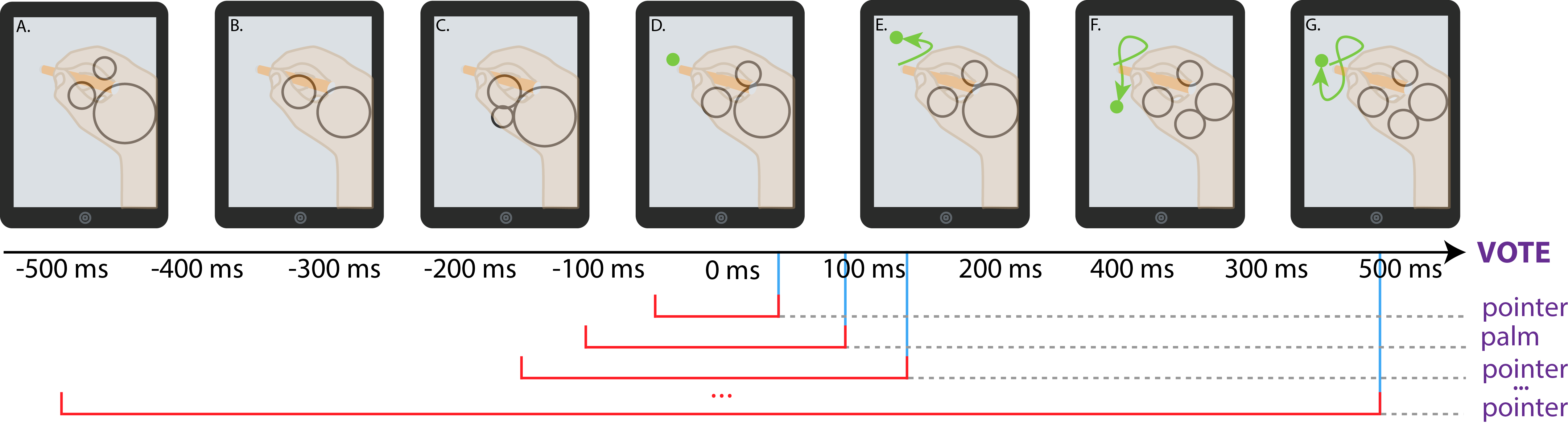

Rather than examining these properties only at the instant of touch down and classifying immediately, our algorithm makes an initial guess, then refines this guess every 50 ms until 500 ms have elapsed. At that point, a final decision is made by examining the votes cast at each 50 ms interval.
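The voting scheme can be illustrated with a minimal Python sketch. It models only the votes cast at each 50 ms interval, not the guess made at touch down. The majority rule, the tie-breaking choice, and the names `vote_times`, `final_decision`, `classify_touch`, and `toy_classifier` are assumptions made for illustration, not taken from our implementation.

```python
from collections import Counter
from typing import Callable, List, Tuple

STEP_MS = 50    # refinement interval described in the text
FINAL_MS = 500  # time after touch down at which the final decision is made


def vote_times(step_ms: int = STEP_MS, final_ms: int = FINAL_MS) -> List[int]:
    """Times (ms after touch down) at which a vote is cast: 50, 100, ..., 500."""
    return list(range(step_ms, final_ms + 1, step_ms))


def final_decision(votes: List[str]) -> str:
    """Majority vote over all interval classifications.

    Assumption: a simple majority is used and ties resolve to "palm".
    """
    counts = Counter(votes)
    return "stylus" if counts["stylus"] > counts["palm"] else "palm"


def classify_touch(classify_at: Callable[[int], str]) -> Tuple[str, List[str]]:
    """Collect one vote per interval, then return (final label, all votes).

    classify_at(t_ms) is a hypothetical stand-in for the trained decision
    tree evaluated at time t_ms; it returns "stylus" or "palm".
    """
    votes = [classify_at(t) for t in vote_times()]
    return final_decision(votes), votes


def toy_classifier(t_ms: int) -> str:
    """Toy stand-in: the touch looks like a stylus at first, then like a palm."""
    return "stylus" if t_ms < 150 else "palm"


label, votes = classify_touch(toy_classifier)
print(label, votes)
```

In this toy run the first two votes say "stylus" and the remaining eight say "palm", so the touch is ultimately rejected even though the early guesses were wrong.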

At each time step t, we examine touch point behavior over a time window from -t to t, taking the mean, standard deviation, and range of the touch radius, touch velocity, and distance to other touches (Figure 2). These behavior metrics, or features, are then fed into a previously trained decision tree, which classifies the touch as intentional or unintentional input.
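As a rough sketch of the feature computation, the Python below computes the mean, standard deviation, and range of the three properties over one window. The sample layout `(time_ms, x, y, radius)`, the use of population standard deviation, the nearest-neighbor definition of "distance to other touches", and the function names are all assumptions for illustration. The released source code may differ.

```python
import math
from statistics import mean, pstdev
from typing import Dict, List, Sequence, Tuple

Sample = Tuple[float, float, float, float]  # (time_ms, x, y, radius), sorted by time


def summarize(name: str, values: Sequence[float]) -> Dict[str, float]:
    """Mean, standard deviation, and range of one property (zeros if no data)."""
    if not values:
        return {f"{name}_mean": 0.0, f"{name}_std": 0.0, f"{name}_range": 0.0}
    return {
        f"{name}_mean": mean(values),
        f"{name}_std": pstdev(values),
        f"{name}_range": max(values) - min(values),
    }


def window_features(
    samples: List[Sample],
    others: List[Tuple[float, float]],
    t_ms: float,
) -> Dict[str, float]:
    """Features for one touch over the window [-t_ms, t_ms] around touch down.

    others holds positions of other touches, treated as static points here
    (a simplification made for this sketch).
    """
    window = [s for s in samples if -t_ms <= s[0] <= t_ms]
    radii = [s[3] for s in window]
    # Speed between consecutive samples, in position units per millisecond.
    speeds = [
        math.hypot(b[1] - a[1], b[2] - a[2]) / (b[0] - a[0])
        for a, b in zip(window, window[1:])
        if b[0] > a[0]
    ]
    # Distance from each sample to the nearest other touch.
    distances = [
        min(math.hypot(s[1] - ox, s[2] - oy) for ox, oy in others)
        for s in window
    ] if others else []

    features: Dict[str, float] = {}
    features.update(summarize("radius", radii))
    features.update(summarize("speed", speeds))
    features.update(summarize("distance", distances))
    return features


samples = [(0, 0.0, 0.0, 5.0), (50, 3.0, 4.0, 7.0), (100, 3.0, 4.0, 9.0)]
features = window_features(samples, others=[(3.0, 0.0)], t_ms=100)
```

The resulting dictionary holds nine values (three statistics for each of three properties), which would form the input vector for the decision tree at that time step.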

Classifying at regular intervals has the benefit of providing an early guess that can be used to give the user immediate feedback and that may later be revised. Figure 1 shows a video of our application demonstrating this behavior: palm touches are initially guessed as styluses and are later removed. Because in most cases the palm occludes these temporary guesses, the user is often unaware of this guessing behavior.
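One way to picture this provisional feedback is a canvas that draws a touch while the current guess is "stylus" and retracts it when the guess flips to "palm". The sketch below is a hypothetical illustration in Python. The class `ProvisionalCanvas` and its methods are invented for this example and do not reflect the application's actual rendering code.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]


class ProvisionalCanvas:
    """Tracks which touches are currently drawn as ink (hypothetical sketch)."""

    def __init__(self) -> None:
        self.visible: Dict[int, List[Point]] = {}  # touch id -> drawn points

    def update(self, touch_id: int, guess: str, points: List[Point]) -> None:
        """Apply the latest guess for a touch: draw it or retract it."""
        if guess == "stylus":
            self.visible[touch_id] = list(points)
        else:
            # Guess revised to "palm": remove any ink drawn for this touch.
            self.visible.pop(touch_id, None)


canvas = ProvisionalCanvas()
canvas.update(1, "stylus", [(10.0, 10.0)])               # early guess: draw it
drawn_early = 1 in canvas.visible
canvas.update(1, "palm", [(10.0, 10.0), (10.5, 10.2)])   # later guess: retract
drawn_late = 1 in canvas.visible
```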

Source Code

Source code for the feature computation and the binary classifiers can be downloaded here.